ChatGPT is free to use. ChatGPT Plus (GPT-4) costs $20/month, or $23.80/month (roughly $24) in the EU and the UK because of taxes.

Trying to find out how much ChatGPT costs? Not sure what GPT Tokens are?

- In this guide, we’re covering all of this.

- Read our simple explanations of OpenAI’s pricing for ChatGPT.

Let’s go.

How much does ChatGPT cost?

ChatGPT is 100% free to use. The paid version, ChatGPT Plus, costs $20 or $23.80/month, depending on your region.

The base price is $20/month, but in the EU and the UK OpenAI adds $3.80 in taxes on top of that, so the total is $23.80 USD every month.

There isn’t any ChatGPT annual subscription.

- Just monthly subscriptions.

- Paying for ChatGPT Plus monthly for a whole year will cost you $285.60 after taxes ($23.80 × 12).

- That would be $240 before taxes.

However, if taxes apply in your region, the after-tax figure is the realistic price.
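The yearly totals are simple arithmetic, and a short script makes them easy to re-check when prices change. This is a minimal sketch that uses the prices quoted in this guide; the constant and function names are our own, and the tax amount is the EU/UK figure mentioned above, so other regions may differ.

```python
from decimal import Decimal

# Prices quoted in this guide (USD). Check OpenAI's site for current values.
BASE_MONTHLY = Decimal("20.00")  # ChatGPT Plus before tax
TAX_MONTHLY = Decimal("3.80")    # tax added in the EU and the UK (assumed fixed)


def yearly_cost(monthly_price: Decimal, months: int = 12) -> Decimal:
    """Total cost of paying a monthly subscription for `months` months."""
    return monthly_price * months


before_tax = yearly_cost(BASE_MONTHLY)
after_tax = yearly_cost(BASE_MONTHLY + TAX_MONTHLY)

print(f"Before tax: ${before_tax}")  # Before tax: $240.00
print(f"After tax:  ${after_tax}")   # After tax:  $285.60
```

`Decimal` is used instead of floats so that the cents come out exact.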

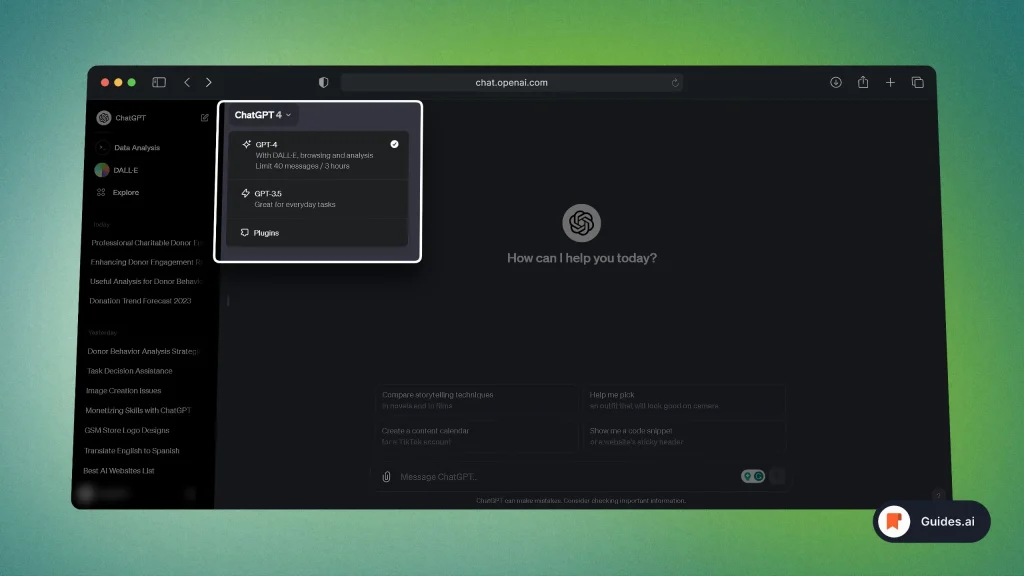

1. ChatGPT Plus

- ChatGPT Free: Uses the GPT-3.5 model

- ChatGPT Plus: Offers GPT-4 with various plugins.

Read our guide for all of ChatGPT’s models to understand the differences between free vs paid ChatGPT.

Relevant read: How to upgrade to ChatGPT Plus

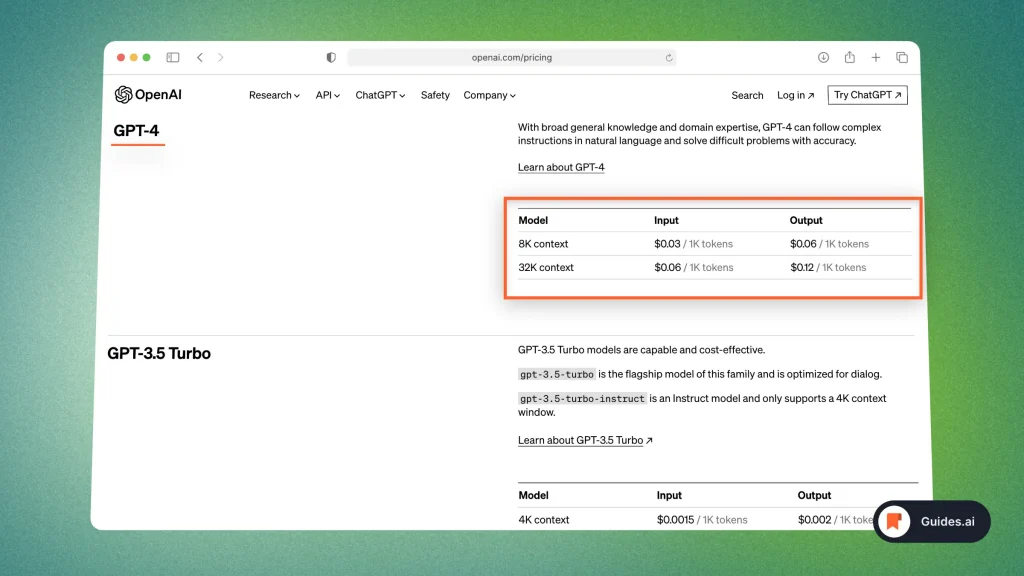

2. Tokens – API

- ChatGPT API costs $0.002 for every 1000 tokens used.

- This translates to a charge of 0.2 cents for every 1000 tokens.

- In comparison, text-davinci-003 (GPT-3) charges $0.02 for every 1000 tokens, which is 2 cents.

- That makes the ChatGPT API 10 times cheaper than text-davinci-003.
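To see what per-1000-token pricing means for a real workload, here is a small sketch that converts a token count into dollars. It assumes the rates of $0.002 (ChatGPT API) and $0.02 (davinci-003) per 1000 tokens; the dictionary keys are illustrative labels we made up, not official API model identifiers.

```python
from decimal import Decimal

# USD per 1,000 tokens, as assumed in this sketch. The keys are
# illustrative labels, not official API model identifiers.
PRICE_PER_1K_TOKENS = {
    "chatgpt-api": Decimal("0.002"),
    "davinci-003": Decimal("0.02"),
}


def api_cost(model: str, tokens: int) -> Decimal:
    """Cost in USD of processing `tokens` tokens with the given model."""
    return PRICE_PER_1K_TOKENS[model] * tokens / 1000


# Example: one million tokens on each model.
for model in PRICE_PER_1K_TOKENS:
    print(f"{model}: ${api_cost(model, 1_000_000):.2f}")
# chatgpt-api: $2.00
# davinci-003: $20.00
```

Swap in the current rates from OpenAI's pricing page before relying on the numbers.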

Read more about ChatGPT’s API in our other guide.

- What Tokens Are: Tokens can be as short as one character or as long as one word, and they are the building blocks of text.

- Input and Output Tokens: When you send a message to ChatGPT, the words in your message are counted as input tokens. The response generated by ChatGPT also consists of tokens, and these are counted as output tokens.

- Cost and Tokens: Many language models charge you based on the total number of tokens in both input and output. So, the longer your message and the response, the more tokens you’ll be billed for.

- Limitations: APIs often have token limits for each call. If your conversation exceeds these limits, you’ll need to truncate or shrink your text to fit.

- Managing Costs: To control costs, you can keep your messages concise and to the point. Shorter messages mean fewer tokens and lower costs.
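The token limit and cost tips above can be sketched in a few lines. This example is only an approximation: it assumes about 4 characters per token, a common rule of thumb for English text, so a real tokenizer will give different counts. Both function names are hypothetical helpers written for this guide.

```python
def estimate_tokens(text: str) -> int:
    """Rough token estimate, assuming about 4 characters per token."""
    if not text:
        return 0
    return max(1, len(text) // 4)


def trim_history(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages that fit within `max_tokens`."""
    kept = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 40, "b" * 40, "c" * 40]  # about 10 tokens each
print(len(trim_history(history, max_tokens=25)))  # 2
```

Dropping the oldest messages first is just one way to shrink a conversation; summarizing earlier turns is another option.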

Link to the official pricing: OpenAI Pricing Page

Our image above is from September 2023.

We’ll update it as pricing changes, but OpenAI’s pricing page is always the most up-to-date source.

Shortlist: Understanding ChatGPT’s Pricing

- Pricing Model: ChatGPT API pricing depends on usage. You pay based on the number of tokens processed, counting both your input and the generated output.

- Tokens: Tokens are chunks of text. In English, a token can be as short as one character or as long as one word.

- Free Tier: Some services offer a free tier with limited tokens, like OpenAI’s GPT-3.

- Pay-as-You-Go: For heavier usage, you’ll move into a pay-as-you-go model.

- Pricing Per Token: You’re charged for every token, both in the input you send and in the output you receive.

- Variable Costs: Different API providers may have varying per-token costs.

- Estimating Costs: To estimate costs, calculate the tokens in your input message and received response. Add them together.

- Control Costs: You can control costs by managing the length of your messages. Shorter input and output means fewer tokens and lower costs.

- Monitoring Usage: Regularly check your usage to avoid unexpected charges.

- API Pricing: Keep an eye on the pricing specifics provided by the API you use.
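Estimating and monitoring usage can be combined into one small helper. The sketch below adds up input and output tokens across requests and flags when a spending limit is passed. The `UsageTracker` class, the flat $0.002 per 1000 tokens rate, and the $1.00 budget are all assumptions for illustration; real APIs may price input and output differently.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class UsageTracker:
    """Hypothetical helper that totals token usage and its cost."""

    price_per_1k: Decimal  # USD per 1,000 tokens (assumed flat rate)
    budget: Decimal        # spending limit in USD (illustrative)
    input_tokens: int = 0
    output_tokens: int = 0

    def record(self, input_tokens: int, output_tokens: int) -> None:
        """Add one request's input and output tokens to the running total."""
        self.input_tokens += input_tokens
        self.output_tokens += output_tokens

    @property
    def cost(self) -> Decimal:
        """Cost so far: input plus output tokens at the flat rate."""
        total = self.input_tokens + self.output_tokens
        return self.price_per_1k * total / 1000

    @property
    def over_budget(self) -> bool:
        return self.cost > self.budget


tracker = UsageTracker(price_per_1k=Decimal("0.002"), budget=Decimal("1.00"))
tracker.record(input_tokens=300, output_tokens=700)
print(f"${tracker.cost} spent, over budget: {tracker.over_budget}")
```

Calling `record` after every request keeps the total current, so you can stop or warn before the bill grows unexpectedly.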

Conclusion

There you go. You’ve just learned everything about ChatGPT’s pricing!

While ChatGPT is a powerful, free tool, you can opt for the paid version for more benefits.

Learn how to become more productive with our guides on how to use AI.

Thank you for reading this,

Ch David and Daniel